This computer test determines whether the user of the system is a human or a computer. If the number of requests to the site exceeds the threshold value, protection is activated, and the user must enter information from CAPTCHA to prove that they are not part of a botnet, and only then will they be granted access to the requested resource.

The CAPTCHA test itself is well known, but its history has some interesting facts that are worth mentioning.

The amazing history of CAPTCHA

The word CAPTCHA is an acronym for “Completely Automatic Public Turing Test to Tell Computers and Humans Apart.” It sets a task that a human can solve quite easily, but artificial intelligence (a computer or bot) cannot or can hardly solve.

The original system for such verification was developed in the early 2000s by engineers at Carnegie Mellon University (a private university and research center located in Pittsburgh, Pennsylvania, USA). The team, led by Luis von Ahn (better known as Big Lou), was looking for a way to filter out multiple automatic registrations and messages from robot programs (spam bots) that pretended to be humans.

The team developed a program that displayed a form with text so distorted that humans could understand it, but computer bots could not. Anyone who encountered such a check had to enter the correct text in the field before they could submit the questionnaire or write a message.

The program was so successful that it began to be actively used around the world.

Unfortunately, the engineers forgot about one very important human trait: the desire to make money. Soon, an additional way of earning money appeared on the Internet: solving CAPTCHA tasks, which began to be sponsored by spammers who generated spam, floods, and account hacks. This type of income became especially popular in poor countries, where the opportunity to earn even a small amount of money for thousands of CAPTCHA solutions was very attractive.

Despite this, the service remained very popular. Its developers were tormented by only one thought – the thought that they were forcing millions of people to senselessly convert images into text, wasting their time on manipulations that served no purpose other than protection.

In an interview with The New York Times in 2011, Von An pondered, “Can something useful be done with this time?”

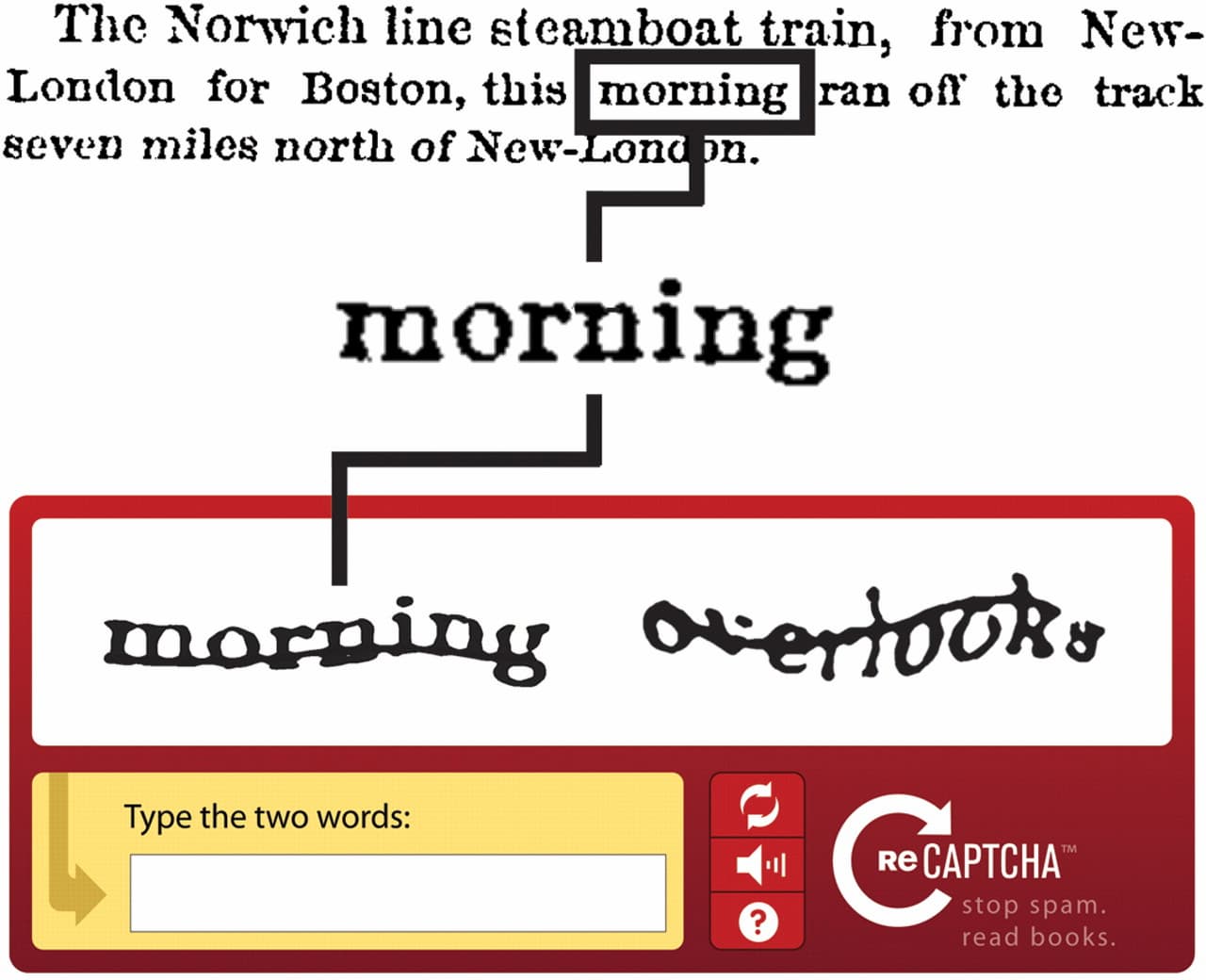

After some time, a new version was introduced – the reCAPTCHA application, which was also based on the need to enter text from an image into a form, but instead of a random set of letters and numbers, the user now had to decipher real text from archival documents. The software is quite capable of recognizing printed text, but over time, the ink fades and the computer can no longer identify certain words, whereas humans can handle this task well.

First, archived issues of The New York Times were subjected to recognition, and then, after the service was sold to Google in 2009, old books were deciphered. It turns out that every time you entered text with reCAPTCHA, you were doing free work for one of these companies, and the illegible words and expressions that made no sense were actually fragments from archival tests.

Of course, not every user was happy with their “free” work for Google, but reCAPTCHA still remained the most effective way to combat bots. Von An was very pleased with the new version of the program, assuring that the service would live on for a very long time, and that there was plenty of printed material for recognition in the archives.

However, in this age of high technology and widespread, comprehensive development of the internet, what seems modern and progressive today may cease to exist in a few years. The same could happen to CAPTCHA.

In 2014, Google analysts discovered that artificial intelligence could recognize and crack even the most complex CAPTCHA and reCAPTCHA images with 99.8% accuracy, which once again rendered these services virtually useless for protecting against Internet bots.

To solve this problem, Google began to improve the technology, and in the spring of 2012, an experiment was launched to recognize images from Google Maps and Google Street View, fragments of which contained task numbers.

In early 2015, developers introduced a new system that does not rely on the user’s ability to recognize words or numbers from an image, but analyzes their behavior on the website page until they click the verification checkbox. All you need to do is click the “I am not a robot” button and continue working.

The algorithm analyzes the user’s actions and, based on the data obtained, concludes whether it is a human or a bot. If the analysis does not give a clear answer, the user may be asked to undergo an additional verification, for example, to select only those pictures with cats from several pictures with animals.

The “arms race” between security specialists and spammers will never end. New protection mechanisms will continue to be developed, and new intelligent ways to circumvent them will continue to be found.

Currently, reCAPTCHA technology is one of the most reliable ways to combat botnets and is successfully used in the Stingray Service Gateway to protect against DDoS attacks, while the constant development of the platform and the release of new versions allow the use of up-to-date protection mechanisms.