Operators use traffic classification to keep the network predictable and to deliver high-quality service to users.

What is traffic classification and why does an operator need it?

Traffic classification is a way to “mark” packets traveling across the network and decide which ones are more important and which ones can wait. It shows the operator what data is passing through the network and lets that data be managed by priority. This matters most at peak times: routers forward packets from critical applications first, which reduces their loss and jitter, while less important traffic is temporarily restricted. Without this approach, all traffic would be treated equally, and a Zoom call, for example, could stutter because someone is downloading a torrent.

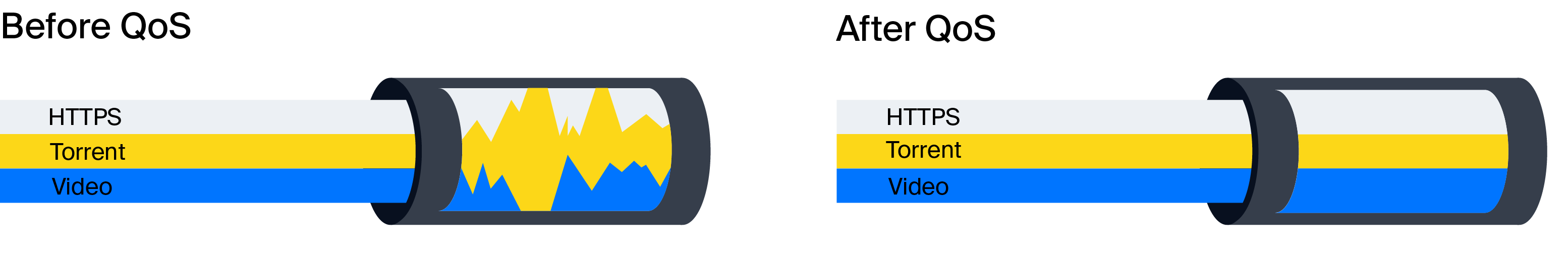

Another key task is ensuring quality of service (QoS). With classification, operators can increase the priority of critical services while reducing the priority of background traffic, such as large file downloads or P2P.

QoS management options on a shared channel:

- Fixed bandwidth limitation for a group of protocols

In this scenario, a fixed portion of the channel is allocated to certain traffic classes so that priority services always have sufficient bandwidth. For example, you can set a rule that torrents occupy no more than 20% of the channel, freeing up resources for HTTPS or video streaming.

- Peak load control with low-priority traffic displacement

When total traffic approaches the limit, the network device begins to drop or delay packets of low-priority classes to ensure stable operation of critical applications. This method “smooths out” peaks and allows the upper limit to be set 10-15% below the nominal channel bandwidth, with users hardly noticing any restrictions.
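Both options can be approximated with a simple rate-allocation model. The sketch below is a hypothetical illustration working on average rates rather than individual packets, and it is not how Stingray or any particular device implements shaping: classes are served in priority order (class 0 is assumed to be the most important), an optional fixed ceiling per class models the first option, and a total limit set below the nominal bandwidth models the second.

```python
from __future__ import annotations


def allocate(offered: dict[int, float], limit: float,
             caps: dict[int, float] | None = None) -> dict[int, float]:
    """Share `limit` among traffic classes, serving the most important class first.

    Assumption of this sketch: class 0 is the highest priority, class 7 the lowest.
    `caps` holds optional fixed ceilings per class (fixed bandwidth limitation);
    when the total exceeds `limit`, the shortfall falls on the lowest-priority
    classes (peak load control with displacement).
    """
    caps = caps or {}
    granted: dict[int, float] = {}
    remaining = limit
    for cls in sorted(offered):
        wanted = min(offered[cls], caps.get(cls, float("inf")))
        granted[cls] = min(wanted, remaining)
        remaining -= granted[cls]
    return granted


# Hypothetical figures in Mbit/s: 1000 Mbit/s nominal channel, limit set 13% below it,
# torrents (class 7) capped at 20% of the channel.
LIMIT = 870
CAPS = {7: 200}

print(allocate({0: 50, 1: 300, 7: 400}, LIMIT, CAPS))          # {0: 50, 1: 300, 7: 200}
print(allocate({0: 50, 1: 600, 3: 250, 7: 300}, LIMIT, CAPS))  # {0: 50, 1: 600, 3: 220, 7: 0}
```

In the first call there is spare capacity, yet torrents still stop at their 200 Mbit/s ceiling. In the second, demand exceeds the limit, so class 3 is trimmed and class 7 is displaced entirely while classes 0 and 1 are untouched.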

What are the different types of traffic classification?

There are various approaches to determining how a network should handle a specific data packet.

One approach is classification by header fields. It relies on CoS (Class of Service) in the VLAN tag and DSCP (Differentiated Services Code Point) in the IP header. Switches and routers read these service fields in frame and packet headers and decide how to process the traffic accordingly. In simpler terms, DSCP is a system of conventional “color tags” for packets that every DiffServ-aware device along the path understands.

For CoS, priority is carried in the three-bit Priority Code Point (PCP) field of the 802.1Q VLAN tag in an Ethernet frame. These bits distinguish eight levels, from ordinary everyday traffic up to routing protocol control messages.
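To make the three-bit field concrete, here is a minimal sketch that reads the PCP value from a tagged frame. It assumes a single 802.1Q tag with TPID 0x8100 placed right after the destination and source MAC addresses; double-tagged (QinQ) frames are not handled, and the function name is illustrative.

```python
from __future__ import annotations

import struct


def parse_vlan_tag(frame: bytes) -> tuple[int, int, int]:
    """Return (pcp, dei, vid) from an Ethernet frame carrying one 802.1Q tag."""
    # The tag starts after the 6-byte destination MAC and 6-byte source MAC.
    tpid, tci = struct.unpack_from("!HH", frame, 12)
    if tpid != 0x8100:  # only the common single-tag TPID is checked in this sketch
        raise ValueError("frame has no 802.1Q tag")
    pcp = tci >> 13           # 3-bit Priority Code Point: the CoS value, 0..7
    dei = (tci >> 12) & 0x1   # Drop Eligible Indicator
    vid = tci & 0x0FFF        # 12-bit VLAN ID
    return pcp, dei, vid


# Zeroed MACs, TPID 0x8100, TCI 0xA064, then EtherType 0x0800 (IPv4).
frame = bytes(12) + bytes([0x81, 0x00, 0xA0, 0x64, 0x08, 0x00])
print(parse_vlan_tag(frame))  # (5, 0, 100)
```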

DSCP uses six bits in the IP header and provides 64 possible priority values. It is the more advanced of the two and the one most commonly used today. DSCP is the marking at the heart of the DiffServ architecture, which we discuss in more detail below.
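The sketch below shows where the six DSCP bits sit: they form the upper part of the second byte of the IPv4 header (the DS field), and the two remaining bits carry ECN (Explicit Congestion Notification). It assumes `header` holds a raw IPv4 header; the function name is illustrative.

```python
from __future__ import annotations


def dscp_from_ipv4(header: bytes) -> tuple[int, int]:
    """Split the DS field (second byte of the IPv4 header) into DSCP and ECN."""
    ds_field = header[1]
    dscp = ds_field >> 2    # upper 6 bits: 64 possible values, 0..63
    ecn = ds_field & 0b11   # lower 2 bits: Explicit Congestion Notification
    return dscp, ecn


# Version/IHL byte 0x45, DS byte 0xB8, rest of a 20-byte header zeroed.
header = bytes([0x45, 0xB8]) + bytes(18)
print(dscp_from_ipv4(header))  # (46, 0)
```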

Another approach is DPI (Deep Packet Inspection), which classifies traffic by signatures. A signature is a set of characteristic features that identifies a specific service or application: a unique byte sequence, a string in a protocol, a port, a domain name in the TLS SNI field, or even specific source and destination IP addresses. Essentially, the device “looks inside” the packet and compares it against a rule base to determine which application sent it.
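A toy signature matcher illustrates the idea. This is a hypothetical sketch, far simpler than a production DPI engine: every field set in a `Signature` must match, and the first matching rule wins. The BitTorrent rule uses the fixed handshake prefix (byte 19 followed by "BitTorrent protocol"); the Zoom and DNS rules are illustrative only.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Signature:
    """A toy signature: every field that is not None must match."""
    app: str
    dst_port: Optional[int] = None
    payload_prefix: Optional[bytes] = None
    sni_suffix: Optional[str] = None


def matches(sig: Signature, dst_port: int, payload: bytes, sni: Optional[str]) -> bool:
    if sig.dst_port is not None and sig.dst_port != dst_port:
        return False
    if sig.payload_prefix is not None and not payload.startswith(sig.payload_prefix):
        return False
    if sig.sni_suffix is not None and (sni is None or not sni.endswith(sig.sni_suffix)):
        return False
    return True


def classify(rules: Sequence[Signature], dst_port: int, payload: bytes,
             sni: Optional[str] = None) -> str:
    # First matching signature wins; unmatched traffic is reported as "unknown".
    for sig in rules:
        if matches(sig, dst_port, payload, sni):
            return sig.app
    return "unknown"


SIGNATURES = [
    Signature("bittorrent", payload_prefix=b"\x13BitTorrent protocol"),
    Signature("zoom", dst_port=443, sni_suffix=".zoom.us"),  # hypothetical rule
    Signature("dns", dst_port=53),                           # port-only rule
]

print(classify(SIGNATURES, 443, b"\x16\x03\x01", "us04web.zoom.us"))  # zoom
```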

The main classification technology: DiffServ

DiffServ (RFC 2474 and RFC 2475) is the most flexible and scalable method of ensuring QoS. Each packet receives a DSCP label, and every network device along the path reads it to decide whether to forward the packet with priority, delay it, or discard it.

The key concept here is Per-Hop Behavior (PHB): each device in the network independently classifies incoming packets and applies policies to them based on the DSCP value in the IP header. Roughly speaking, PHB specifies what to do with a packet at network nodes: forward with priority, delay, drop, and so on.

Each node follows the same rules that map DSCP values to traffic classes, which keeps behavior consistent across the network. The priority value is written to the marking fields of the packet headers: DSCP in IP, the Traffic Class bits in MPLS, and PCP in 802.1Q. Subsequent nodes can rely on this label instead of reanalyzing the packet contents.
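Carrying one priority in several headers can be sketched with bit operations. The mapping used here, where the top three DSCP bits become both the MPLS Traffic Class and the 802.1Q PCP, is an assumption for illustration; real networks configure this mapping explicitly. The field positions follow the MPLS label stack entry (label 20 bits, TC 3, S 1, TTL 8) and the 802.1Q TCI (PCP 3, DEI 1, VID 12).

```python
from __future__ import annotations


def propagate_priority(dscp: int, mpls_lse: int, vlan_tci: int) -> tuple[int, int]:
    """Copy a DSCP-derived priority into an MPLS label stack entry and an 802.1Q TCI."""
    prio = dscp >> 3  # assumed mapping: top 3 DSCP bits, 0..7
    # TC occupies bits 11..9 of the 32-bit label stack entry.
    mpls_lse = (mpls_lse & ~(0b111 << 9)) | (prio << 9)
    # PCP occupies bits 15..13 of the 16-bit TCI; DEI and VID are kept.
    vlan_tci = (vlan_tci & 0x1FFF) | (prio << 13)
    return mpls_lse, vlan_tci


# Label 100, bottom-of-stack bit set, TTL 64; VLAN 100 with PCP 0.
lse = (100 << 12) | (1 << 8) | 64
new_lse, new_tci = propagate_priority(46, lse, 100)
print((new_lse >> 9) & 0b111, hex(new_tci))  # 5 0xa064
```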

According to RFC standards, there are four PHB models within DiffServ:

- Default/Best Effort (BE). As the name suggests, the device does its best to deliver the packet but provides no guarantees: all such traffic is processed uniformly, without prioritization. The DSCP value for this class is 000000, and it also applies to all packets that have not been classified. A related PHB, Lower Effort (LE), is defined in RFC 8622 with DSCP 000001 and is meant for traffic of even lower priority than Best Effort.

- Class Selector (CS). This model provides class-based prioritization: packets with a higher class should be forwarded with less delay than packets with a lower class. The class is set by the three most significant bits of the DSCP code, which gives eight classes (CS0 to CS7), while the three least significant bits are 0 (codepoints of the form xxx000).

- Assured Forwarding (AF). This model uses the full structure of the DSCP code: it assigns a traffic service class and, within that class, a drop precedence that decides which packets are discarded first when the channel is overloaded. The DSCP code structure for AF is aaa dd 0, where:

  - aaa is the class number (AF1, AF2, AF3, AF4);

  - dd is the drop precedence (01 low, 10 medium, 11 high).

  The model is suitable for streaming and video communication (RFC 2597).

- Expedited Forwarding (EF). Designated by DSCP code 101110 (decimal 46). These packets get the highest priority and the shortest queues, so their probability of loss is minimal. In essence, this is a fast-track forwarding model for traffic that is sensitive to delay, jitter, and drops. It is often used for voice and VoIP traffic and for remote access (RFC 2598, since replaced by RFC 3246).
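The four PHBs can be told apart from the bit patterns described above. The sketch below decodes a DSCP value into a PHB name; it covers only the codepoints discussed in this section (plus LE, DSCP 000001, from RFC 8622) and reports anything else as "other". CS0 shares the value 000000 with Best Effort, so it is reported as BE.

```python
def phb_for(dscp: int) -> str:
    """Return the PHB name for a 6-bit DSCP value (only the codepoints discussed above)."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must be in 0..63")
    if dscp == 0b000000:
        return "BE"  # Default / Best Effort (same value as CS0)
    if dscp == 0b000001:
        return "LE"  # Lower Effort, RFC 8622
    if dscp == 0b101110:
        return "EF"  # Expedited Forwarding, decimal 46
    cls = dscp >> 3             # three most significant bits
    drop = (dscp >> 1) & 0b11   # next two bits: AF drop precedence
    if 1 <= cls <= 4 and drop != 0 and (dscp & 0b1) == 0:
        return f"AF{cls}{drop}"  # aaa dd 0
    if (dscp & 0b111) == 0:
        return f"CS{cls}"        # xxx000
    return "other"


print([phb_for(v) for v in (0, 10, 34, 40, 46)])  # ['BE', 'AF11', 'AF41', 'CS5', 'EF']
```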

Traffic classification in Stingray DPI

In the Stingray DPI solution, the operator can not only distribute packets among traffic classes but also flexibly control the speeds of specific sessions. A separate session policing service handles this: it can set the speed for a specific connection and override its traffic class. Configuration is done through a file describing the classes, in which packets are assigned DSCP values. A label can be given in numeric form (decimal, hexadecimal, or octal) or as a text abbreviation.
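As an illustration of accepting several notations, here is a hypothetical parser. The exact syntax Stingray expects is not described here, so the conventions below are assumptions: a `0x` prefix for hexadecimal, a leading `0` for octal, plain digits for decimal, and names such as `BE`, `EF`, `AF41`, or `CS5`.

```python
import re


def parse_dscp(text: str) -> int:
    """Parse a DSCP written as decimal, 0x-hex, 0-prefixed octal, or a name (hypothetical syntax)."""
    s = text.strip().lower()
    if s in ("be", "default"):
        value = 0
    elif s == "ef":
        value = 46
    elif m := re.fullmatch(r"af([1-4])([1-3])", s):
        value = int(m[1]) * 8 + int(m[2]) * 2  # aaa dd 0
    elif m := re.fullmatch(r"cs([0-7])", s):
        value = int(m[1]) * 8                  # xxx000
    elif s.startswith("0x"):
        value = int(s, 16)
    elif len(s) > 1 and s.startswith("0"):
        value = int(s, 8)                      # C-style octal, e.g. "056"
    else:
        value = int(s, 10)
    if not 0 <= value <= 63:
        raise ValueError(f"DSCP out of range: {text!r}")
    return value


print([parse_dscp(t) for t in ("46", "0x2E", "056", "EF")])  # [46, 46, 46, 46]
```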

The rest of the logic is built around two marking scenarios:

- Without marking – traffic is classified only inside the device and leaves with its original labels. Eight classes are used in this mode.

- With marking – packets leave with new DSCP values specified in the settings. All 64 classes can be used here.
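The difference between the two scenarios comes down to what is written back into the DS byte on egress. In this hypothetical sketch, `None` stands for "without marking" and the byte is forwarded unchanged; otherwise the six DSCP bits are replaced while the two ECN bits are preserved. A real device must also recompute the IPv4 header checksum after changing the byte, which is omitted here.

```python
from typing import Optional


def egress_ds_field(ds_field: int, new_dscp: Optional[int]) -> int:
    """Return the DS byte to send: unchanged without marking, re-marked otherwise."""
    if new_dscp is None:  # without marking: the original label leaves the device
        return ds_field
    if not 0 <= new_dscp <= 63:
        raise ValueError("DSCP must be in 0..63")
    # Re-mark: new DSCP in the upper 6 bits, original ECN bits kept.
    return (new_dscp << 2) | (ds_field & 0b11)


print(hex(egress_ds_field(0x01, 46)))  # 0xb9
```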

Let’s look at an example of prioritization based on the IP header. The DS field is 8 bits long: 6 bits carry the DSCP, which gives 64 possible combinations, and the remaining 2 bits are used for ECN (Explicit Congestion Notification). A simplified scheme often uses only the 3 most significant bits, which gives 8 classes (CS0 to CS7). Specific protocols and services can be assigned to each class, for example:

| Class | Protocols and services |
|---|---|
| CS0 | DNS, ICMP |
| CS1 | HTTP, HTTPS, QUIC |
| CS2 | Unoccupied |
| CS3 | Default (all other traffic) |
| CS4 | Viber, WhatsApp, SIP |
| CS5 | AS local IP, peering |
| CS6 | TCP unknown |
| CS7 | BitTorrent |
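The example mapping can be expressed as a lookup with a default class. The protocol names are hypothetical labels, not Stingray configuration keys, and `dscp_for_class` simply places the class number in the top three bits (the Class Selector form xxx000).

```python
# Protocol-to-class map from the example table; keys are illustrative labels.
CLASS_OF = {
    "dns": 0, "icmp": 0,
    "http": 1, "https": 1, "quic": 1,
    "viber": 4, "whatsapp": 4, "sip": 4,
    "peering": 5,
    "tcp_unknown": 6,
    "bittorrent": 7,
}
DEFAULT_CLASS = 3  # CS3: all other traffic


def class_for(protocol: str) -> int:
    """Look up the class for a protocol label, falling back to the default class."""
    return CLASS_OF.get(protocol.lower(), DEFAULT_CLASS)


def dscp_for_class(cls: int) -> int:
    """Class Selector codepoint: class number in the top 3 of the 6 DSCP bits."""
    return cls << 3


print(class_for("SIP"), dscp_for_class(class_for("SIP")))  # 4 32
```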

By combining these classes, operators can build tariff plans for different scenarios: for gamers, a profile that prioritizes ICMP; for corporate clients, one focused on messengers and VoIP.

To sum up

Traffic classification is the foundation on which predictable and manageable network operation is built. In Stingray, it is implemented through packet marking, DSCP usage, flexible service classes, and policing. All this allows the operator to fine-tune priorities and speeds for specific scenarios, whether it’s premium plans for gamers, stable profiles for corporate clients, or protecting channels from overload caused by background downloads.

This approach not only helps improve the quality of services for users, but also makes more efficient use of network resources. As a result, the operator gets a tool that simultaneously improves the customer experience and reduces infrastructure development costs.